Update Thu Jan 10 09:38:42 AKST 2008: Unless you really need a complete mirror like this, a much faster way to achieve something similar is to use Thanassis Tsiodras's Wikipedia Offline method. Templates and other niceties don't work quite as well with his method, but the setup is much, much faster and easier.

I've come to depend on the Wikipedia. Despite potential problems with vandalism, pages without citations, and uneven writing, it's so much better than anything else I have available. And it's a click away.

Except when flooding on the Richardson Highway and a mistake by an Alaska Railroad crew cut off Fairbanks from the world. So I've been exploring mirroring the Wikipedia on a laptop. Without images and fulltext searching of article text, it weighs in at 7.5 GiB (20061130 dump). If you add the fulltext article search, it's 23 GiB on your hard drive. That's a bit much for a laptop (at least mine), but a desktop could handle it easily. The image dumps aren't being made anymore since many of the images aren't free from copyright, but even the last dump in November 2005 was 79 GiB. That dump took about two weeks to download, and I haven't been able to figure out how to integrate it into my existing mirror.

In any case, here's the procedure I used:

Install Apache, PHP5, and MySQL. I'm not going to go into detail here, as there are plenty of good tutorials and documentation pages for installing these three things on virtually any platform. I've successfully installed Wikipedia mirrors on OS X and Linux, but there's no reason why this wouldn't work on Windows, since Apache, PHP and MySQL are all available for that platform. The only potential problem is that the text table is 6.5 GiB, and some Windows file systems can't handle files larger than 4 GiB (NTFS should be able to handle it, but older filesystems like FAT32 can't, since FAT32 tops out just under 4 GiB per file).

Download the latest version of the mediawiki software from http://www.mediawiki.org/wiki/Download (the software links are on the right side of the page).

Create the mediawiki database:

$ mysql -p

mysql> create database wikidb;

mysql> grant create,select,insert,update,delete,lock tables on wikidb.* to user@localhost identified by 'userpasswd';

mysql> grant all on wikidb.* to admin@localhost identified by 'adminpasswd';

mysql> flush privileges;

Untar the mediawiki software to your web server directory:

$ cd /var/www

$ tar xzf ~/mediawiki-1.9.2.tar.gz

Point a web browser to the configuration page, probably something like http://localhost/config/index.php, and fill in the database section with the database name (wikidb), users, and passwords from the SQL you typed in earlier. Click the 'install' button. Once that finishes:

$ cd /var/www/

$ mv config/LocalSettings.php .

$ rm -rf config/

More detailed instructions for getting mediawiki running are at: http://meta.wikimedia.org/wiki/Help:Installation

Now, get the Wikipedia XML dump from http://download.wikimedia.org/enwiki/. Find the most recent directory that contains a valid pages-articles.xml.bz2 file.

Also download the mwdumper.jar program from http://download.wikimedia.org/tools/. You'll need Java installed to run this program.

Configure your MySQL server to handle the load by editing /etc/mysql/my.cnf, changing the following settings:

[mysqld]

max_allowed_packet = 128M

innodb_log_file_size = 100M

[mysql]

max_allowed_packet = 128M

Restart the server, empty some tables and disable binary logging:

$ sudo /etc/init.d/mysql restart

$ mysql -p wikidb

mysql> set sql_log_bin=0;

mysql> delete from page;

mysql> delete from revision;

mysql> delete from text;

Now you're ready to load in the Wikipedia dump file. This will take several hours to more than a day, depending on how fast your computer is (a dual 1.8 GHz Opteron system with 4 GiB of RAM took a little under 17 hours with an average load around 3.0 on the 20061103 dump file). The command is (all on one line):

$ java -Xmx600M -server -jar mwdumper.jar --format=sql:1.5 enwiki-20060925-pages-articles.xml.bz2 | mysql -u admin -p wikidb

You'll use the administrator password you chose earlier. You can also use your own MySQL account; since you created the database, you have all the needed rights.
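If you'd rather drive the load from a script, here is a minimal Python sketch of the same pipeline. The flags and file names are the ones from the command above; the function names are made up for illustration, and the script assumes mwdumper.jar and the dump file are in the current directory.

```python
import subprocess


def build_import_commands(dump_path, database="wikidb", user="admin", heap="600M"):
    """Return the two argument lists for the mwdumper | mysql pipeline."""
    dumper = [
        "java", "-Xmx" + heap, "-server", "-jar", "mwdumper.jar",
        "--format=sql:1.5", dump_path,
    ]
    loader = ["mysql", "-u", user, "-p", database]
    return dumper, loader


def run_import(dump_path):
    """Run the pipeline and return (mwdumper exit code, mysql exit code)."""
    dumper_cmd, loader_cmd = build_import_commands(dump_path)
    dumper = subprocess.Popen(dumper_cmd, stdout=subprocess.PIPE)
    loader = subprocess.Popen(loader_cmd, stdin=dumper.stdout)
    # Close our copy of the pipe so mwdumper sees a broken pipe if mysql exits early.
    dumper.stdout.close()
    loader_status = loader.wait()
    dumper_status = dumper.wait()
    return dumper_status, loader_status


if __name__ == "__main__":
    # Only print the commands here; call run_import() yourself to start the load.
    for command in build_import_commands("enwiki-20060925-pages-articles.xml.bz2"):
        print(" ".join(command))
```

A plain shell pipe only reports the exit status of mysql, so a crash in mwdumper partway through a 17 hour load can go unnoticed. Checking both exit codes catches that case.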

After this finishes, it's a good idea to make sure there are no errors in the MySQL tables. I normally get a few errors in the pagelinks, templatelinks and page tables. To check the tables for errors:

$ mysqlcheck -p wikidb

If there are tables with errors, you can repair them in two different ways. The first is done inside MySQL and doesn't require shutting down the MySQL server. It's slower, though:

$ mysql -p wikidb

mysql> repair table pagelinks extended;

The faster way requires shutting down the MySQL server:

$ sudo /etc/init.d/mysql stop

(or however you stop the server on your system)

$ sudo myisamchk -r -q /var/lib/mysql/wikidb/pagelinks.MYI

$ sudo /etc/init.d/mysql start

There are several important extensions to mediawiki that Wikipedia depends on. You can view all of them by going to http://en.wikipedia.org/wiki/Special:Version, which shows everything Wikipedia is currently using. You can get the latest versions of all the extensions with:

$ svn co http://svn.wikimedia.org/svnroot/mediawiki/trunk/extensions extensions

svn is the client command for Subversion (http://subversion.tigris.org/). It's a revision control system that eliminates most of the issues people had with CVS (and rcs before that). The command above will check out all the extensions code into a new directory on your system named extensions.

The important extensions are the parser functions, citation functions, CategoryTree and WikiHiero. Here's how you install these from the extensions directory that svn created.

Parser functions:

$ cd extensions/ParserFunctions

$ mkdir /var/www/extensions/ParserFunctions

$ cp Expr.php ParserFunctions.php SprintfDateCompat.php /var/www/extensions/ParserFunctions

$ cat >> /var/www/LocalSettings.php

require_once("$IP/extensions/ParserFunctions/ParserFunctions.php");

$wgUseTidy = true;

^d

(The last four lines just add those PHP commands to the LocalSettings.php file. It's probably easier to just use a text editor.)

Citation functions:

$ cd ../Cite

$ mkdir /var/www/extensions/Cite

$ cp Cite.php Cite.i18n.php /var/www/extensions/Cite/

$ cat >> /var/www/LocalSettings.php

require_once("$IP/extensions/Cite/Cite.php");

^d

CategoryTree:

$ cd ..

$ tar cf - CategoryTree/ | (cd /var/www/extensions/; tar xvf -)

$ cat >> /var/www/LocalSettings.php

$wgUseAjax = true;

require_once("$IP/extensions/CategoryTree/CategoryTree.php");

^d

WikiHiero:

$ tar cf - wikihiero | (cd /var/www/extensions/; tar xvf -)

$ cat >> /var/www/LocalSettings.php

require_once("$IP/extensions/wikihiero/wikihiero.php");

^d
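All four extensions are switched on the same way, by adding lines to LocalSettings.php, so a small script can do it in one pass. The following is only a sketch: the function name is made up, and it assumes the file is small enough to rewrite in place. It skips lines that are already there, and if the file ends with a closing ?> tag it puts the new lines before the tag, since PHP treats anything after ?> as plain output.

```python
from pathlib import Path


def append_settings(settings_path, new_lines):
    """Add each line to the settings file unless it is already present.

    Returns the list of lines that were actually added.
    """
    path = Path(settings_path)
    lines = path.read_text().splitlines()
    missing = [line for line in new_lines if line not in lines]
    if not missing:
        return []
    # Peel off trailing blank lines and a closing PHP tag so they stay last.
    tail = []
    while lines and lines[-1].strip() in ("", "?>"):
        tail.insert(0, lines.pop())
    path.write_text("\n".join(lines + missing + tail) + "\n")
    return missing


# The settings used in the steps above.
EXTENSION_LINES = [
    'require_once("$IP/extensions/ParserFunctions/ParserFunctions.php");',
    '$wgUseTidy = true;',
    'require_once("$IP/extensions/Cite/Cite.php");',
    '$wgUseAjax = true;',
    'require_once("$IP/extensions/CategoryTree/CategoryTree.php");',
    'require_once("$IP/extensions/wikihiero/wikihiero.php");',
]
```

Calling append_settings("/var/www/LocalSettings.php", EXTENSION_LINES) a second time changes nothing, which makes it safe to rerun after copying in another extension.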

If you want the math to show up properly, you'll need to have LaTeX, dvips, convert (from the ImageMagick suite), GhostScript, and an OCaml setup to build the code. Here's how to do it:

$ cd /var/www/math

$ make

$ mkdir ../images/tmp

$ mkdir ../images/math

$ sudo chown -R www-data ../images/

My web server runs as user www-data. If yours uses a different account, that's what you'd change the images directories to be owned by. Alternatively, you could use chmod -R 777 ../images to make them writeable by anyone.

Change the $wgUseTeX variable in LocalSettings.php to true. If your Wikimirror is at the root of your web server (as it is in the examples above), you need to make sure that your apache configuration doesn't have an Alias section for images. If any of the programs mentioned aren't in the system PATH (like if you installed them in /usr/local/bin or /sw/bin on a Mac), you'll need to put them in /usr/bin or someplace the script can find them.

MediaWiki comes with a variety of maintenance scripts in the maintenance directory. To allow these to function, you need to put the admin user's username and password into AdminSettings.php:

$ mv /var/www/AdminSettings.sample /var/www/AdminSettings.php

and change the values of $wgDBadminuser to admin (or what you really set it to when you created the database and initialized your mediawiki) and $wgDBadminpassword to adminpasswd.

Now, if you want the Search box to search anything besides the titles of articles, you'll need to rebuild the search tables. As I mentioned earlier, these tables make the database grow from 7 GiB to 23 GiB (as of the September 25, 2006 dump), so make sure you've got plenty of space before starting this process. I've found a Wikimirror is pretty useful even without full searching so don't abandon the effort if you don't have 20+ GiB to devote to a mirror.
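Before kicking off a rebuild that can run for days, it's worth checking the free space on the disk that holds the database. This is a minimal Python sketch; the function names are made up for illustration, and the 23 GiB figure is the one quoted above.

```python
import shutil

GIB = 2**30  # bytes in one GiB


def free_gib(path):
    """Return the free space, in GiB, on the filesystem that contains path."""
    return shutil.disk_usage(path).free / GIB


def has_room(path, needed_gib):
    """Return True if the filesystem containing path has at least needed_gib free."""
    return free_gib(path) >= needed_gib


if __name__ == "__main__":
    # "." is a stand-in; point this at your MySQL data directory instead.
    print("free: %.1f GiB" % free_gib("."))
    print("room for the search tables:", has_room(".", 23))
```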

To rebuild everything:

$ php /var/www/maintenance/rebuildall.php

This script builds the search tables first (which takes several hours), and then moves on to rebuilding the link tables. Rebuilding the link tables takes a very, very long time, but there's no problem breaking out of this process once it starts. I've found that this has a tendency to damage some of the link tables, requiring a repair before you can continue. If that does happen, note the table that was damaged and the index number where the rebuildall.php script failed. Then:

$ mysql -p wikidb

mysql> repair table pagelinks extended;

(Replace pagelinks with whatever table was damaged.) I've had repairs take anywhere from a few minutes to 12 hours, so keep this in mind.

After the table is repaired, edit the /var/www/maintenance/rebuildall.php script and comment out these lines:

# dropTextIndex( $database );

# rebuildTextIndex( $database );

# createTextIndex( $database );

# rebuildRecentChangesTablePass1();

# rebuildRecentChangesTablePass2();

and insert the index number where the previous run crashed into this line:

refreshLinks( 1 );

Then run it again.

One final note: Doing all of these processes on a laptop can be very taxing on a computer that might not be well equipped to handle a full load for days at a time. If you have a desktop computer, you can do the dumping and rebuilding on that computer, and after everything is finished, simply copy the database files from the desktop to your laptop. I just tried this with the 20061130 dump, copying all the MySQL files from /var/lib/mysql/wikidb on a Linux machine to /sw/lib/mysql/wikidb on my MacBook Pro. After the copying was finished, I restarted the MySQL daemon, and the Wikipedia mirror is now live on my laptop. The desktop had MySQL version 5.0.24 and the laptop has 5.0.16. I'm not sure how different these can be for a direct copy to work, but it does work between different platforms (Linux and OS X) and architectures (AMD64 and Intel Core Duo).

image from pinkbelt

I came across The Books because they're similar to Animal Collective (Feels is a great album) in the last.fm similarity diagrams I've been playing with. Lost and Safe is their latest record. The music is hard to describe, but is oddly compelling. Most tracks are combinations of simple string melodies, odd percussion, manipulated vocals and lots of spoken words from random recordings. It's not quite ambient music, and it's also not the sort of thing you can hum while walking to your car in the parking lot. All the layered melodies, strange spoken words, and descending string progressions really bring a lot of emotion to the music.

My favorite tracks on the album are Smells Like Content, An Animated Description of Mr. Maps, and An Owl With Knees. Smells Like Content and An Owl are both more straightforward than the other tracks, with nice layered melodies and mostly traditional vocals. Mr. Maps is a percussive tour de force and demands to be listened to loud. It's also got lots of spoken word material, including the great line: "Leaving the friendly aroma of doughnuts and chicken tenders hanging in the desert air." There's also someone reading what sounds like the criminal profile of a serial killer, but there isn't enough detail to figure out if it's from a movie or a news recording.

This is certainly not music for everyone. But if your tastes tilt toward the experimental and you're looking for a change, give The Books a listen. I'm looking forward to picking up their previous two albums (Thought For Food, The Lemon of Pink) in the near future.

Last weekend I was listening to Enon's High Society with the idea I'd post a review of it here on my blog. Music blogs typically report on the newest, usually not-even-released albums, but I'd rather discuss the stuff I'm listening to and why I like it (or don't).

I listened to each track of the album, and when I was finished, I couldn't really figure the album out because it seemed like it was several different records. The John Schmersal tracks served up a couple musical styles, and those sung by Toko Yasuda seemed totally different. So I didn't post.

Earlier today, Ryan at good hodgkins wrote about the same album and said pretty much the same thing, although he identifies only two different styles (Dayton-rock and bass-driven dance-punk) and closes his review with: "This is one of my favorite albums of the decade."

Anyway, here's what I wrote, unedited from that listening session:

Tracks:

Old Dominion -- Pretty much straight up rock with a strong guitar driving the song. A great start to the album.

Count Sheep -- Slower song, more electronic stuff going on in the background. Sharp drums, slow extended guitar parts. Dark feel.

In This City -- Upbeat dancy drums, nice bass and synthesizer melodies in the background really complement Toko Yasuda's voice. Surprising pop sound after the first two more rock numbers with John Schmersal on vocal.

Window Display -- Another Schmersal tune very much in the Pavement, lazily sung indie-rock mold.

Native Numb -- Strange vocal process effect as another instrument, dark, heavy song. More solid drumming driving the song. Lots going on in the background.

Leave It To Rust -- More mellow song, like Count Sheep with a nice melody.

Disposable Parts -- The second upbeat dancy drum song sung by Yasuda with synthesizers and the processed vocals. Definitely a different style than the other songs.

Sold! -- Almost sounds like The Cars with solo vocals to start the song, and other instruments coming in as the song progresses.

Shoulder -- Lots of synthesizer effects, droning guitars and a strong beat backing up Yasuda. Slower than her other songs on the record.

Pleasure and Privilege -- Punk, britpop sound. Great driving drums and loud guitars behind Schmersal's shouted lyrics (and screaming).

Natural Disasters -- Slacker indie sound like Window Display. Not as loud or driven as much of the record.

Carbonation -- Reminds me a bit of Love and Rockets for some reason, maybe because of the way Schmersal is singing and the bass driving the song. Great lyrics.

Salty -- Third upbeat dancy song sung by Yasuda, but more rock than dance. Reminds me of a more techno version of Magnapop.

High Society -- More of the slacker indie sound. Maybe it's more about the strength of the vocals in the mix and the slower beat and more acoustic sound that's making me think Pavement. This one has a bunch of strings in it too, and a Morphine-esque saxophone.

Diamond Raft -- Slow song with a synth loop pulling the song along. Ends before it really begins.

Instruments:

Excellent drumming, nice mixture of guitars, synthesizers and lots of effects. Yasuda has a striking voice. Schmersal can sing straight up rock, punk, as well as slacker-indie.

Overall:

Great record, but quite varied in style. If the songs were in a different order you might think you were listening to three (or four!) different bands.

Anyway, I don't think it's one of the best of the decade like Ryan does, but it's pretty damned good if you can get past the variety of styles.

Back in April I wrote about some methods for seeing who is connected to your iTunes library and what they're listening to. Or downloading. There's actually a much easier way to see what's been accessed, at least if you're on a Mac. (Note that this might work under Cygwin on a Windows machine if the Windows filesystem stores access time.)

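In Unix, all files have three dates associated with them: creation time, last time modified, and last time accessed. (Strictly speaking, what traditional Unix filesystems record is the time the file's inode last changed rather than a true creation time, but for a file that hasn't been touched since it was created, the two match.) You can see all of these times for a particular file by using the stat command. For example, in a terminal window: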

$ stat "The Jesus Lizard/Down/01 Fly On The Wall.m4a"

234881026 2016664 -rw-r--r-- 1 0 4733250 "Aug 22 14:22:57 2006" "May 12 07:45:03 2006" "May 12 07:45:03 2006"

(I've edited the result slightly)

The three times shown are the last accessed, last modified, and the creation date. For this file, you can see that it was accessed earlier today. Since I wasn't playing any music with iTunes today, someone else accessed this file through my shared iTunes library.
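The same timestamps are available from Python through os.stat, which is handy if you want to do something with them in a script. This is a minimal sketch with made-up function names. Note that st_ctime is the inode change time on Unix, and st_birthtime (a true creation time) only exists on some platforms such as OS X, so the sketch returns None for it elsewhere.

```python
import os
import tempfile
import time


def file_times(path):
    """Return the timestamps stat reports for path, as seconds since the epoch."""
    st = os.stat(path)
    return {
        "accessed": st.st_atime,
        "modified": st.st_mtime,
        "changed": st.st_ctime,  # inode change time on Unix
        "created": getattr(st, "st_birthtime", None),  # not available everywhere
    }


def format_time(timestamp):
    """Format a timestamp in the same style as the stat output above."""
    return time.strftime("%b %d %H:%M:%S %Y", time.localtime(timestamp))


if __name__ == "__main__":
    # Demonstrate on a throwaway file; substitute the path to one of your tracks.
    with tempfile.NamedTemporaryFile() as handle:
        for label, value in file_times(handle.name).items():
            print(label, format_time(value) if value is not None else "n/a")
```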

There's an easier way to, ahem, find this information:

$ find ~/Music -type f -amin -360

This command shows all the files in the Music directory (where your iTunes music is stored by default) that were accessed in the last 6 hours. Students have just started showing up for the fall semester here at UAF, and when I ran this command at the end of the day, it yielded 451 tracks that other people on campus listened to. I also had my watch_itunes.py Python script running for part of the day, and it was clear from watching that most of these "listeners" were actually downloading these tracks.
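For anything beyond a quick count, the same search is easy to do in Python. The sketch below is a rough equivalent of the find command above, not a replacement for it: the function name is made up, and find rounds ages to whole minutes while this compares exact timestamps.

```python
import os
import stat
import time


def recently_accessed(root, minutes, now=None):
    """Return regular files under root that were accessed within the last N minutes."""
    if now is None:
        now = time.time()
    cutoff = now - minutes * 60
    hits = []
    for dirpath, _dirnames, filenames in os.walk(root):
        for name in filenames:
            path = os.path.join(dirpath, name)
            try:
                info = os.lstat(path)  # lstat so symlinks are not followed
            except OSError:
                continue  # file vanished or is unreadable; skip it
            if stat.S_ISREG(info.st_mode) and info.st_atime > cutoff:
                hits.append(path)
    return sorted(hits)


if __name__ == "__main__":
    tracks = recently_accessed(os.path.expanduser("~/Music"), 360)
    print(len(tracks), "files accessed in the last 6 hours")
```

Having the paths in a list makes it simple to group them by artist or album directory to see what is popular.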

I wonder how long before this becomes a problem for the Recording Industry Association of America? Seems like Apple has created their own P2P filesharing network by allowing iTunes to share tracks in the same network block. Apple can claim it's not their problem because purchased tracks with their FairPlay DRM won't play on anyone else's computer. But what about all those ripped M4A and MP3 files that will play anywhere? It's a free-for-all, thanks to Apple.

Me says to RIAA lawyer: Talk to Apple. I'm just using iTunes, nothing more.

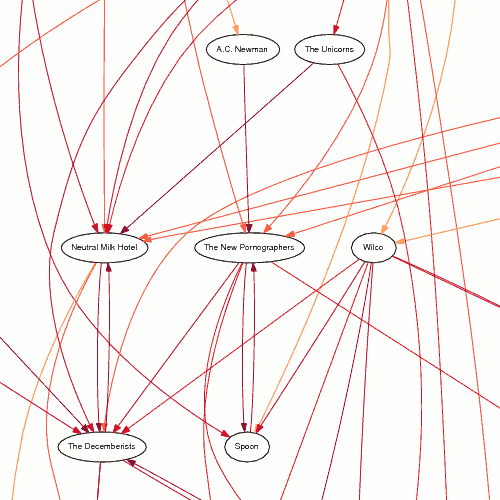

A couple posts ago I showed a hand-made similarity diagram for The Magnetic Fields, where I started at the last.fm similarity page and followed the top two most similar artists until I'd made a diagram. At the time I wondered how hard it would be to generate these things automatically.

Not too hard, it turns out, but it took me several months to get it all working properly. I wrote a Python script that queries the last.fm database, loading and saving the similarity pages for the artists related to the original query. Because it saves the similarities locally, after a few runs there's not much traffic to the last.fm web site.

Once all the data is collected, it generates a text file of links in the DOT language. These files are processed by the graphviz suite of programs (unflatten and dot, in this case) to produce similarity diagrams like the one below (click on the image to download a full-size PDF, 17 KB).
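To give a feel for what such a DOT file looks like, here is a minimal Python sketch that turns a list of similarity links into DOT text. It is not the script described above: the function names, the use of a directed graph, the color scheme, and the example artists and scores are all invented for illustration.

```python
def similarity_to_color(similarity):
    """Map a 0-100 similarity score to a hex color, from light gray to dark red."""
    t = max(0.0, min(100.0, float(similarity))) / 100.0
    red = round(204 + (139 - 204) * t)
    other = round(204 * (1.0 - t))
    return "#{:02x}{:02x}{:02x}".format(red, other, other)


def quote(name):
    """Quote an artist name as a DOT string, escaping embedded double quotes."""
    return '"' + name.replace('"', '\\"') + '"'


def to_dot(edges):
    """Build DOT text from (artist, similar_artist, similarity) tuples."""
    lines = ["digraph similar {"]
    for artist, neighbor, similarity in edges:
        lines.append('    {} -> {} [color="{}"];'.format(
            quote(artist), quote(neighbor), similarity_to_color(similarity)))
    lines.append("}")
    return "\n".join(lines)


if __name__ == "__main__":
    # Placeholder names and scores, not real last.fm data.
    example = [
        ("Artist A", "Artist B", 100),
        ("Artist A", "Artist C", 60),
    ]
    print(to_dot(example))
```

The printed text can be piped straight into unflatten and dot.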

The diagram was produced by:

./build_and_graph.py -a "The Olivia Tremor Control" -c 60 -r 1 | unflatten | dot -Tps > /tmp/graph.ps

Click on the image for a PDF version of the entire graph. The darker and redder the lines, the more similar the two artists are. The options passed to my script control what the initial similarity cutoff is, and what the r-value is for the logistic function that controls how similarity changes as you get farther from the initial artist. For these values, the cutoff starts at 60 for the artists directly connected to The Olivia Tremor Control, rises to 80, then 92, 97, 99 and finally 100. That's why the links all get darker as you move down the diagram, moving farther from the original artist at the top.
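The script itself isn't shown here, so the exact formula is a guess, but a standard logistic growth curve that starts at the initial cutoff and levels off at 100 does reproduce the numbers quoted above. In this minimal Python sketch the function and parameter names are made up, and depth means the number of steps away from the original artist.

```python
import math


def cutoff_at_depth(depth, initial_cutoff=60.0, r=1.0, ceiling=100.0):
    """Logistic curve rising from initial_cutoff at depth 0 toward ceiling."""
    a = (ceiling - initial_cutoff) / initial_cutoff
    return ceiling / (1.0 + a * math.exp(-r * depth))


if __name__ == "__main__":
    print([round(cutoff_at_depth(depth)) for depth in range(6)])
    # prints [60, 80, 92, 97, 99, 100]
```

A larger r makes the cutoff climb toward 100 faster, which prunes the graph sooner. A smaller r lets more distant artists through.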